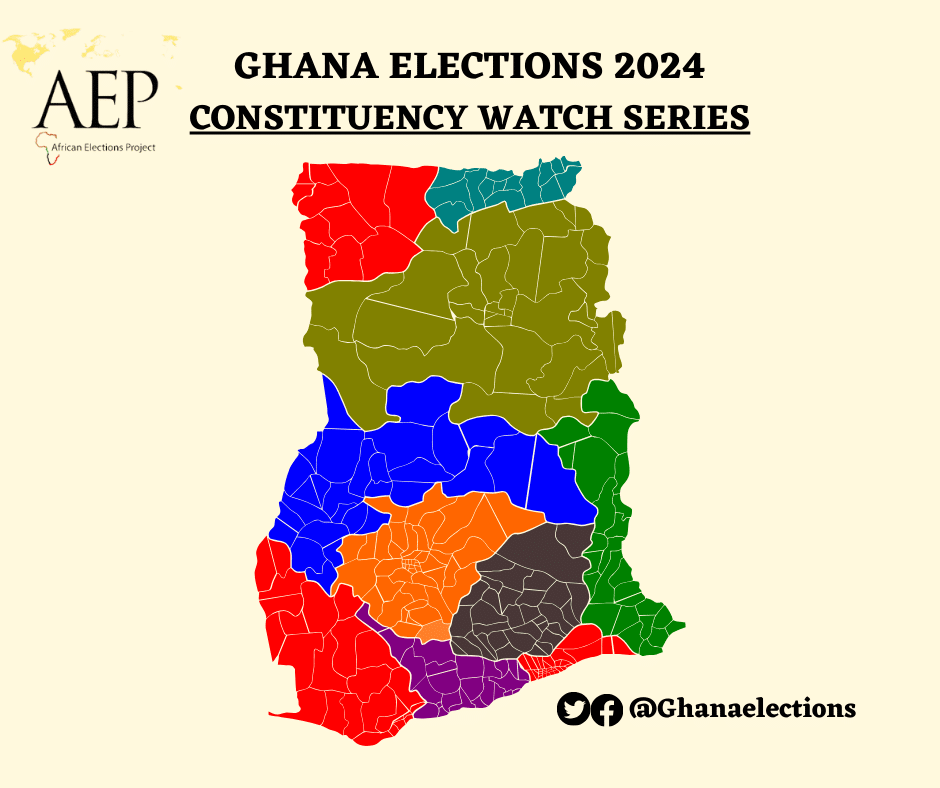

The number of people searching for the term ‘fake news’ has increased since the November 2016 US presidential election.

In 2014, Twitter said that 23 million of its users tweet automatically, without human input. That figure is likely even higher today, which in turn increases the rate at which false news is churned out.

Often, after a tragedy, rumors and false news stories about the event spread on the Internet.

The motivations for spreading fake news range from genuine belief in conspiracy theories, to drawing more traffic to a website, to undermining the mainstream media for political gain.

Fake news and viral hoaxes spread more easily online because of our short attention spans and the deluge of new information constantly pouring into social media.

The truth doesn’t always prevail online.

Social media’s amplification of online misinformation is especially visible during elections, although it is still not clear exactly how much “fake news” influences an election’s outcome. With a majority of millennials getting their news on the go and from social media, understanding what makes false or mistaken ideas spread online is essential.

Cutting down on information overload could help people distinguish real from fake news on social media, and one way to do that could be for companies like Facebook and Twitter to remove the bots that churn out lots of low-quality posts. But as Facebook’s strategy for dealing with disinformation revealed this year, that’s no small task.

False information has always been with us, but in the digital age we face it every day. At the core of the problem of misinformation is confirmation bias – we tend to seize on information that confirms our own view of the world and ignore anything that doesn’t – and the polarization that results from it.

In an environment where intermediaries such as the mainstream media are now frequently bypassed, the public is confronted with a large amount of misleading information that undermines reliable sources.

In such an environment, social media is clearly central, and so is its ambiguous position: on the one hand, it has the power to inform, engage and mobilize people as a sort of freedom utility; on the other, it has the power – and for some, unfortunately, the mission – to misinform, manipulate or control.

Fact-checking is a useful tool, but it remains confined to specific echo chambers and might even reinforce polarization and distrust.

So believing that fact-checking is the solution is itself a kind of confirmation bias. Indeed, debunking efforts and algorithm-driven solutions based on the reputation of the source have so far proven ineffective. To complicate things further, social media users try to maximize the number of likes their posts receive, so information, concepts and debate often get flattened and oversimplified.

So can anything be done? Hopefully, yes. First, simple as it may sound, closer collaboration between science and journalism is crucial. On the one hand, scientists should communicate better with society. On the other, journalists should be better trained to report on complex phenomena such as misinformation and its consequences, economic issues, technology and health.